Fine-Tuning Lessons from the Trenches: What 30+ Experiments Taught Me About Training LLMs

Many people have a general idea of the steps involved in training a transformer-based LLM, such as those behind ChatGPT and Gemini or, more practically, the smaller LLMs released by companies like DeepSeek, Qwen, Mistral, and others.

Pretraining requires an enormous corpus and an enormous amount of compute. Luckily, companies release “base” models, which give the open source community the flexibility to train them into better fit-for-purpose models. It’s worth noting that they also release “Instruct” models, which have already been fine-tuned to follow instructions and act as assistants.

I thought it would be interesting to explore the behavior and nuances of post-training through experiments designed to unpack a few key areas of interest. If you are training models yourself, or are simply curious about the lessons, I hope this helps.

Across more than 30 experiments, I used the Qwen 2.5-7B Instruct model, known as “The King of coding” among small models, to explore three questions:

- Can we further improve the math and coding capability with post-training?

- Can you teach it to be safer in refusing malicious intent?

- To what extent can you change its conversation style to be warmer?

Spoiler alert: I discovered that sometimes skipping conventional steps (like supervised fine-tuning) preserves capabilities better, and that even small dataset imbalances can cause catastrophic failures. But more on that later.

Through these experiments, we can gain a deeper understanding of the nuances of various post-training techniques.

Understanding Post-Training: The Three Approaches

Supervised Fine-Tuning (SFT): Learning from the Perfect Textbook

The analogy is learning from a gold-standard organic chemistry textbook. We know what the perfect answer looks like, and we want the LLM to learn it. This approach has been established for a long time.

- In practice: You feed the model examples like this (just a cartoon example):

- Prompt: “What is $15 + $27?”

- Response: “$15 + $27 = $42”

- The configuration: In my experiments, I used learning rates around 1e-4 and trained on 5,000 HH-RLHF “chosen” responses. HH-RLHF is Anthropic’s official dataset of prompts, each paired with a chosen (good) response and a rejected (bad) response, built for training a helpful and harmless assistant.

- The catch: SFT forces a narrow distribution. If you train on “The answer is $42”, the model is pushed to put its probability mass on that exact phrasing, at the expense of “It’s 42” or just “42”, even though they’re semantically identical. This rigidity became a problem in my experiments.

- When things went wrong: Running SFT on a small dataset (1,000 samples) degraded safety from 100% → 50%. The model began suggesting weapon-making techniques it had previously refused. Math capability also dropped slightly, from 86% → 84% on GSM8K (Grade School Math 8K), a benchmark often used to test basic math problem-solving skills.
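The sketch below makes the “narrow distribution” point concrete. It shows the SFT objective as it is commonly implemented: the average negative log-likelihood of the reference response’s tokens, with prompt tokens masked out. It assumes you already have per-token log-probabilities from the model. The function name and inputs are illustrative, not taken from these experiments. Only the one gold phrasing is rewarded; an equally correct paraphrase contributes nothing to the loss.

```python
import math
from typing import Sequence


def sft_loss(token_logprobs: Sequence[float], loss_mask: Sequence[int]) -> float:
    """Masked negative log-likelihood, the usual SFT objective.

    token_logprobs: log p(token_t | previous tokens) at each position.
    loss_mask: 1 for response tokens (trained on), 0 for prompt tokens (ignored).
    """
    if len(token_logprobs) != len(loss_mask):
        raise ValueError("token_logprobs and loss_mask must have the same length")
    n_trained = sum(loss_mask)
    if n_trained == 0:
        raise ValueError("loss_mask selects no tokens")
    # Only response tokens contribute; the loss is their mean negative log-prob.
    total = sum(-lp for lp, m in zip(token_logprobs, loss_mask) if m)
    return total / n_trained


# Toy sequence: 3 prompt tokens (masked out) followed by 2 response tokens.
logprobs = [math.log(0.9), math.log(0.8), math.log(0.7), math.log(0.5), math.log(0.25)]
mask = [0, 0, 0, 1, 1]
print(round(sft_loss(logprobs, mask), 4))  # 1.0397
```

In Hugging Face-style trainers, masked positions are usually given the label -100, which PyTorch’s cross-entropy ignores by default. The arithmetic is the same as above.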

Reinforcement Learning: Two Distinct Flavors

RLVR (Reinforcement Learning with Verifiable Rewards)

A good example is a math question like “What is $5+$5?”, where the answer is easy to verify. A more complex example is training a computer to win at Go, where the game’s outcome decides the reward. Both have verifiable rewards.

- The DeepSeek R1 breakthrough: Research by DeepSeek demonstrated how far this can go. Their approach uses simple rule-based verification: extract the predicted answer, compare it to the correct answer, and assign a reward of 1.0 if the prediction is correct or 0.0 if it is incorrect. No neural network is needed to compute the reward, just deterministic checking.

- The surprising discovery: Reasoning emerges naturally! The model discovers that “thinking longer” increases the probability of correctness, so it spontaneously develops step-by-step reasoning, self-verification, and error correction behaviors - without being explicitly trained on them.

- Key advantages:

- No reward model training needed.

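- Much lower reward hacking risk: there is no learned reward model to fool (though a weak verifier, such as sparse unit tests, can still be gamed).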

- Infinite training data (generate and verify on-the-fly).

- Works for math, code (unit tests), and other verifiable domains.

- In general, you could theoretically run training for a prolonged period without worrying about the model collapsing, because you have a verifiable reward.
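A rule-based reward of the kind described above can be sketched in a few lines. This is an illustrative version, not DeepSeek’s code. The `extract_final_number` heuristic (take the last number in the response) is an assumption. Real setups such as DeepSeek-R1’s ask the model to give its final answer in a specified format, so that extraction is unambiguous.

```python
import re
from typing import Optional


def extract_final_number(text: str) -> Optional[str]:
    """Hypothetical extractor: return the last number in the text, ignoring thousands commas."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return matches[-1] if matches else None


def verifiable_reward(response: str, correct_answer: str) -> float:
    """Deterministic reward: 1.0 if the extracted answer equals the reference, else 0.0."""
    predicted = extract_final_number(response)
    if predicted is None:
        return 0.0
    try:
        return 1.0 if float(predicted) == float(correct_answer) else 0.0
    except ValueError:  # reference answer is not numeric
        return 0.0


print(verifiable_reward("$5 + $5 = $10", "10"))      # 1.0
print(verifiable_reward("The answer is 11.", "10"))  # 0.0
```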

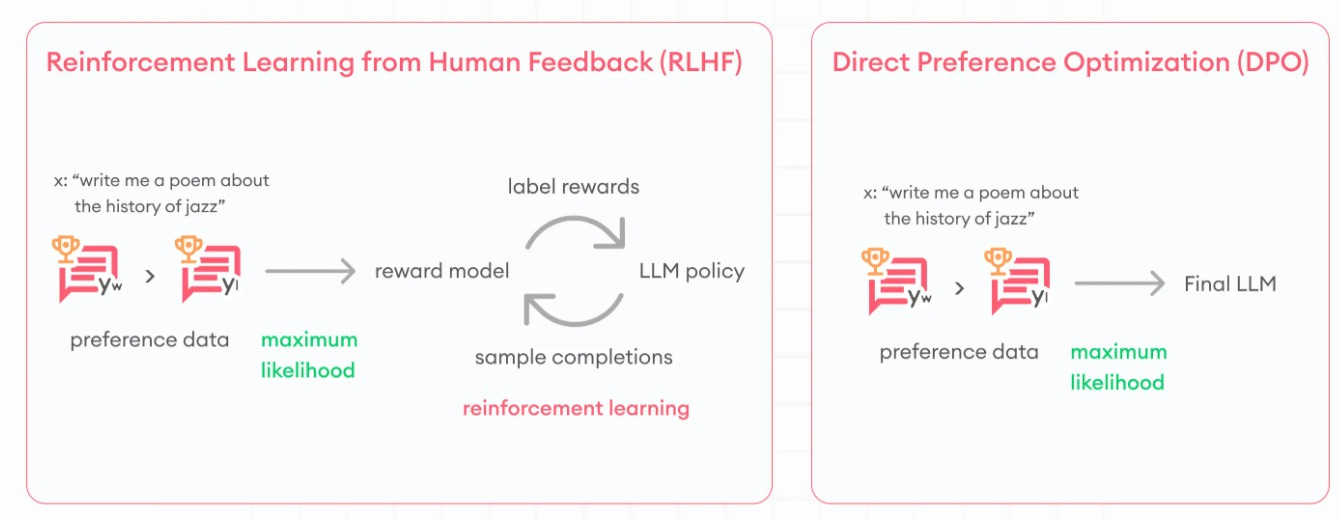

RLHF (Reinforcement Learning from Human Feedback)

The first papers came from researchers at OpenAI, some of whom later became founding members of Anthropic. The rationale is that human labelers often struggle to write out the “best response” or to judge in absolute terms what is “good”, but it is much easier for them to tell which of two answers is better.

Here, you are training the model to learn on its own what constitutes a preferred (better) response, without providing it with a golden template. It’s also useful when a verifiable answer is hard to determine.

- An example I would give: judging which of two jokes is funnier, instead of writing out the “perfect joke”. Unlike the “5+5” math question, you also cannot easily have a machine verify which joke is funnier.

- In my experiments, I trained a 7B-parameter reward model on 50,000 preference pairs drawn from a variety of datasets covering math, coding, conversation, safety responses, and more.

- The risk, reward hacking: The policy model learns to “fool” the reward model rather than actually improve. In one of my GRPO experiments, the reward score tripled while safety collapsed from 25% → 0%. The model was perfectly optimizing… for the wrong objective.

- Be very aware that, unlike with verifiable rewards, you often cannot run training indefinitely without the LLM collapsing or finding a way to cheat (for example, I have seen an LLM degenerate into responses like “1 1 1 1 1 1…” or “human human human”). Numerous examples like these can be found online. You have to save checkpoints and stop once the model is good enough.
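The reward model’s training objective isn’t spelled out above. A common choice for preference pairs, used for example in InstructGPT, is the pairwise Bradley-Terry loss, -log σ(r_chosen - r_rejected). The sketch below assumes that objective. It also computes the pairwise accuracy that is typically reported as a reward model’s validation accuracy. The function names are illustrative.

```python
import math
from typing import Sequence, Tuple


def pairwise_reward_loss(r_chosen: float, r_rejected: float) -> float:
    """Bradley-Terry loss -log(sigmoid(r_chosen - r_rejected)) for one preference pair."""
    margin = r_chosen - r_rejected
    # -log(sigmoid(x)) = log(1 + exp(-x)); the two branches keep exp() from overflowing.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def preference_accuracy(scored_pairs: Sequence[Tuple[float, float]]) -> float:
    """Share of (chosen_score, rejected_score) pairs where the chosen response scores higher."""
    if not scored_pairs:
        raise ValueError("need at least one pair")
    wins = sum(1 for chosen, rejected in scored_pairs if chosen > rejected)
    return wins / len(scored_pairs)


# The loss is lower when the chosen response already outscores the rejected one.
print(pairwise_reward_loss(2.0, 0.0) < pairwise_reward_loss(0.0, 2.0))  # True
```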

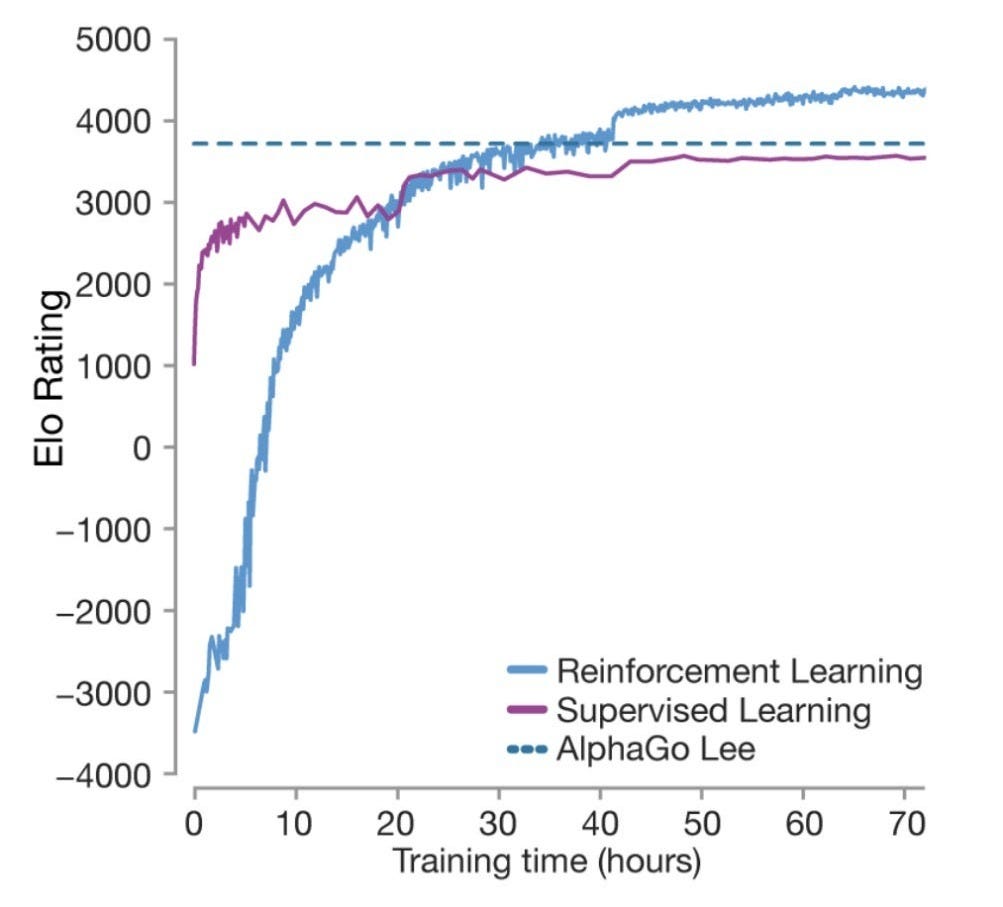

The AlphaGo Lesson: Why RL Matters

For those who remember AlphaGo, the team at DeepMind found that supervised learning on human expert games (the same idea as SFT) could produce a very strong Go player. Beating the best human players, however, required reinforcement learning, where the machine “learns to win” rather than being told the perfect move by a human. In hindsight, it makes sense: how can you beat the best human Go player by following only the conventional human playbook?

The famous Move 37 (Game 2 against Lee Sedol) was an unconventional move that no human would have taught (SFT); it emerged through reinforcement learning.

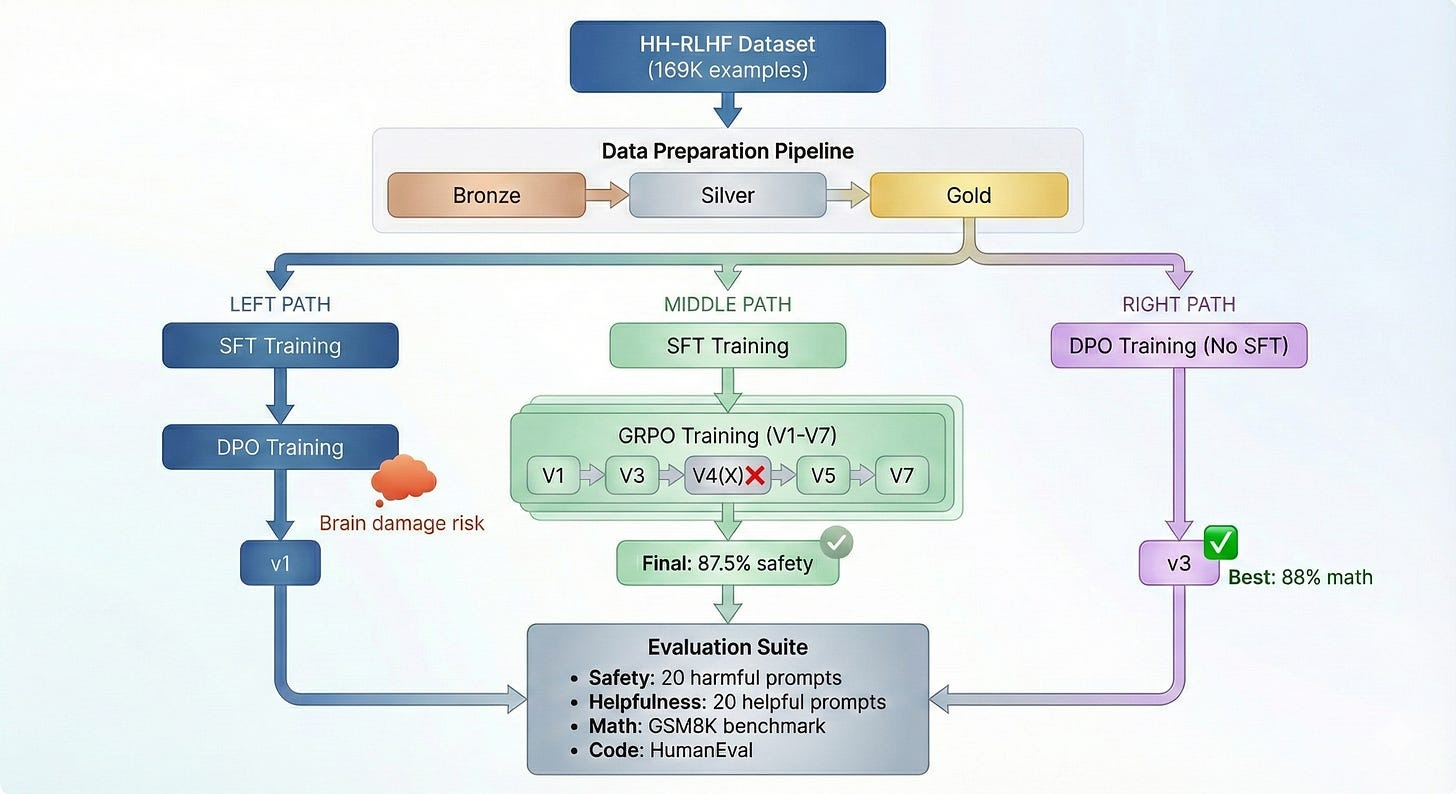

The Experimental Journey: 30+ Experiments

The Setup

- Base model: Qwen 2.5-7B-Instruct (already instruction-tuned - this matters!)

- Dataset: 169K HH-RLHF examples (95% helpful, 5% safety - this imbalance became important).

- Imagine you hired a brilliant but completely inexperienced new assistant and need to train them to handle customer requests. You decide the best way to teach them is to show them examples of past conversations and how well previous assistants did. Anthropic has a massive file cabinet containing 169,000 such conversations. In each one, a human reviewer compared two candidate replies and marked which one was better, showing the new assistant what a good job looks like.

- Evaluation framework:

- 20 harmful prompts (should refuse)

- 20 helpful prompts (should assist)

- 10 edge cases (context-dependent)

- GSM8K math benchmark (50 problems)

- HumanEval code evaluation

Figure: Overall architecture

Experiment 1: SFT - The “Brain Damage” Discovery

First, I did what seemed obvious: supervised fine-tuning on HH-RLHF “chosen” responses, that is, having the LLM learn from a textbook of good conversations. The model became more conversational, but when I ran quick safety checks, I found that it now suggested weapon-making techniques it had previously refused.

Numbers:

- Training config: 1,000 samples, learning rate 2e-4.

- Safety: 100% → 50% (weapons test FAILED).

- Math capability (GSM8K): 86% → 84%.

- What went wrong: Small dataset + high LR overrode the base model’s safety training.

The lesson: SFT on already-tuned models is dangerous. It forces narrow distributions that can override safety training. The base model already knew how to follow instructions - SFT on a small, biased dataset made it worse, not better.

Experiment 2: DPO - The Simple Path That Works

Direct Preference Optimization (DPO) is a method that aligns models with human preferences by directly optimizing on “chosen” vs. “rejected” response pairs. It has become a fast favorite in the AI community because it bypasses the complex and often unstable reward modeling step typically found in traditional RLHF, offering a much simpler and more stable training process.

Unlike PPO-based RLHF, which juggles a policy, a value model, a reference model, and a separately trained reward model, DPO only needs two models: a trainable policy and a frozen reference. Each additional model in the system adds another point of failure and another knob to tune.

The three components:

- Policy model (trainable) - what you’re improving.

- Reference model (frozen) - anchor to prevent drift.

- Preference pairs - chosen vs. rejected responses.

How it works: DPO trains the policy to increase how much more likely it finds the chosen response than the rejected one, compared with the frozen reference model. A parameter called beta sets how strongly the policy is held close to the reference: a higher beta means less drift. This eliminates the need for a separate reward model, because the preference is learned implicitly by comparing response probabilities.

Source: https://www.superannotate.com/blog/direct-preference-optimization-dpo
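The sketch below shows the DPO objective for a single preference pair, following the loss from the DPO paper. The inputs are the summed log-probabilities of each full response under the trainable policy and the frozen reference. The function names and the `beta=0.1` default are illustrative starting points, not the settings used in these experiments.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one (chosen, rejected) pair; each *_logp is a summed response log-prob."""
    # How much more the policy favors each response than the frozen reference does.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    # beta is the KL-strength term from the DPO derivation: larger beta, less drift.
    return -log_sigmoid(beta * (chosen_logratio - rejected_logratio))


# Policy has moved toward the chosen response and away from the rejected one.
print(round(dpo_loss(-10.0, -20.0, -15.0, -15.0), 4))  # 0.3133
```

Every quantity in the loss comes from the policy or the frozen reference. There is no reward model or value network anywhere in it.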

Results:

- Accuracy: 57.6% (vs 50% random baseline)

- Margins: Solid positive margin

- Safety: 4/4 harmful prompts refused

- Helpfulness: 4/4 helpful prompts assisted
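The Accuracy and Margins figures above look like the implicit-reward metrics that DPO trainers commonly log; Hugging Face TRL reports them as `rewards/accuracies` and `rewards/margins`. I’m assuming that is what they are. DPO’s implicit reward for a response is beta times its policy-vs-reference log-ratio, so both metrics can be computed as below. The `PairLogps` container is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PairLogps:
    """Summed response log-probs for one preference pair (hypothetical container)."""
    policy_chosen: float
    policy_rejected: float
    ref_chosen: float
    ref_rejected: float


def dpo_reward_metrics(pairs: List[PairLogps], beta: float = 0.1) -> Tuple[float, float]:
    """Return (accuracy, mean margin) of DPO's implicit rewards over a batch."""
    if not pairs:
        raise ValueError("need at least one pair")
    wins = 0
    margin_sum = 0.0
    for p in pairs:
        # Implicit reward = beta * (policy log-prob - reference log-prob) for each response.
        r_chosen = beta * (p.policy_chosen - p.ref_chosen)
        r_rejected = beta * (p.policy_rejected - p.ref_rejected)
        margin = r_chosen - r_rejected
        margin_sum += margin
        if margin > 0:
            wins += 1
    return wins / len(pairs), margin_sum / len(pairs)


batch = [PairLogps(-10.0, -20.0, -15.0, -15.0), PairLogps(-20.0, -10.0, -15.0, -15.0)]
print(dpo_reward_metrics(batch))
```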

The lesson: DPO is a stable and effective approach. All three DPO variants in my experiments converged successfully - no divergence, no instability, just steady learning from preference pairs.

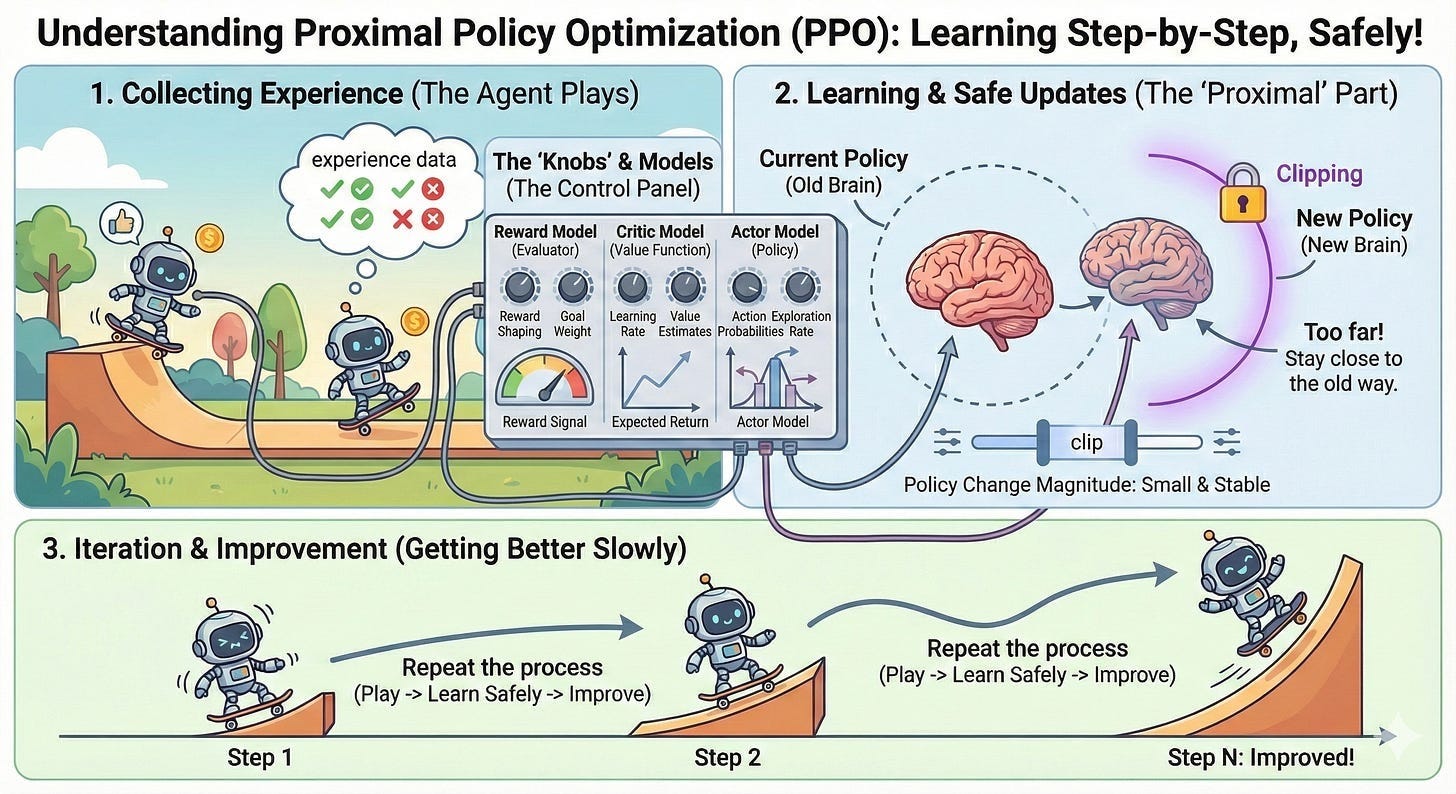

Experiment 3: RLHF with PPO - The Unstable Beast

I attempted the full RLHF stack: reward model + PPO (Proximal Policy Optimization). This is where things got really interesting… and frustrating.

What I learned about PPO: I trained a 7B reward model on 50K preference pairs (achieving 97% validation accuracy), then attempted PPO training. Despite the working reward model, PPO proved fundamentally unstable for this task.

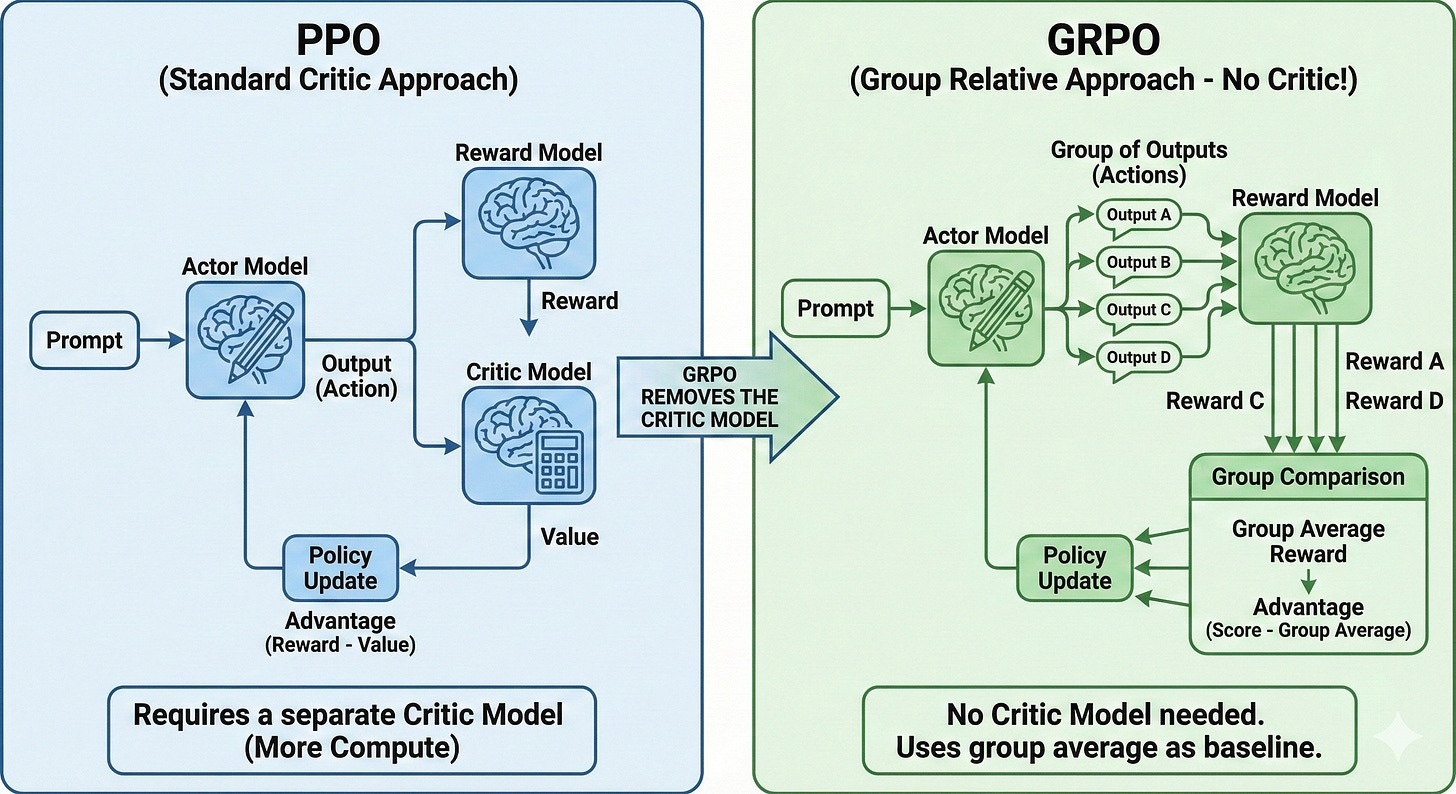

RLHF requires four models working together, which explains the complexity and fragility.

The components:

- Reward Model (7B params): Learned from 50K human preference pairs.

- Outputs scalar reward score.

- My challenge: The Score head weights weren’t trained initially.

- Policy Model (7B params): Model being improved.

- Generates responses.

- Optimized to maximize reward from RM.

- Value Model (shares policy weights): Estimates expected future rewards.

- Critical for advantage calculation.

- Primary instability source - often diverges.

- Reference Model (7B params, frozen): Prevents catastrophic forgetting.

- KL divergence penalty keeps policy close to the reference.

The training loop: For each prompt, the policy generates a response, and the reward model scores it (e.g., 2.47). The value model estimates the expected reward (e.g., 2.10). The difference between actual and expected (0.37 in this case) is the “advantage”, a measure of how much better this response was than anticipated. The policy is updated to make responses with positive advantage more likely (and those with negative advantage less likely), while the value model learns to make more accurate predictions.
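The loop above can be sketched for a single response, with some simplifying assumptions. Real RLHF implementations compute per-token advantages with GAE and fold a KL penalty into the reward. This sketch instead uses the one-step advantage from the example (reward minus value estimate) together with PPO’s standard clipped surrogate objective. The `clip_eps=0.2` default is the commonly used value from the PPO paper, not a setting from these experiments.

```python
import math


def one_step_advantage(reward: float, value_estimate: float) -> float:
    """Simplest advantage: how much better the response did than the value model expected."""
    return reward - value_estimate


def ppo_clipped_objective(logp_new: float, logp_old: float,
                          advantage: float, clip_eps: float = 0.2) -> float:
    """PPO clipped surrogate for one sample (maximized during training)."""
    ratio = math.exp(logp_new - logp_old)  # pi_new(response) / pi_old(response)
    unclipped = ratio * advantage
    clipped = max(min(ratio, 1.0 + clip_eps), 1.0 - clip_eps) * advantage
    # The minimum removes any incentive to push the ratio outside [1 - eps, 1 + eps].
    return min(unclipped, clipped)


adv = one_step_advantage(2.47, 2.10)
print(round(adv, 2))  # 0.37
```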

Why it failed in my experiments:

- Value head loss bounced wildly between 0.01 and 10.0.

- KL divergence collapsed to negative values (-0.48).

- Tried 8 versions with different hyperparameters.

- Never achieved stable training or >25% safety.

My conclusion was that the issue wasn’t the hyperparameters but the architecture itself, which depends on a stable value network.
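A note on the negative KL reading: true KL divergence can never be negative, so a logged value like -0.48 points at the estimator rather than the distributions. A common per-token estimate is log π(x) - log π_ref(x) on sampled tokens, often called the “k1” estimator. It is unbiased when tokens are sampled from the policy, but it can come out negative on a finite batch. The “k3” estimator, (r - 1) - log r with r = π_ref(x)/π(x), is also unbiased and is never negative per token. Whether this explains the reading in these experiments is an assumption; the sketch just compares the two estimators on toy log-probabilities.

```python
import math
from typing import Sequence


def kl_k1(policy_logps: Sequence[float], ref_logps: Sequence[float]) -> float:
    """Mean of log pi(x) - log pi_ref(x): unbiased, but terms and small-batch means can be < 0."""
    terms = [p - r for p, r in zip(policy_logps, ref_logps)]
    if not terms:
        raise ValueError("need at least one token")
    return sum(terms) / len(terms)


def kl_k3(policy_logps: Sequence[float], ref_logps: Sequence[float]) -> float:
    """Mean of (ratio - 1) - log(ratio), ratio = pi_ref/pi: unbiased and each term >= 0."""
    terms = []
    for p, r in zip(policy_logps, ref_logps):
        log_ratio = r - p  # log(pi_ref / pi)
        terms.append(math.expm1(log_ratio) - log_ratio)  # expm1(x) = e^x - 1
    if not terms:
        raise ValueError("need at least one token")
    return sum(terms) / len(terms)


# Toy sampled tokens where the reference happens to assign higher probability than the policy.
policy = [math.log(0.2), math.log(0.3)]
ref = [math.log(0.4), math.log(0.5)]
print(kl_k1(policy, ref) < 0, kl_k3(policy, ref) >= 0)  # True True
```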

Experiment 4: GRPO - The Power of Simplification

GRPO (Group Relative Policy Optimization) eliminates PPO’s value head entirely, which removes one point of potential instability. Instead of estimating absolute reward values, it compares responses within a group.

How it works:

- For each prompt, generate 8 different responses from the policy.

- Score all 8 with the reward model (for example, getting scores like 2.1, 1.8, 2.5, etc.). Use the average of these 8 scores as the baseline (e.g., 2.08).

- Calculate advantages by subtracting this baseline from each score.

- Update the policy to favor responses that scored above average.

Why does it work better than PPO?

- No value network to diverge.

- Relative ranking is easier than estimating absolute values.

- Group comparison always provides contrast.

- Simple normalization: (reward - mean) / std.
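The group-relative advantage is simple enough to show directly. This is a minimal sketch, assuming one list of reward-model scores per prompt; the eight scores in the usage lines are made up. It uses the population standard deviation, though some implementations use the sample standard deviation instead.

```python
import statistics
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages for one prompt: (reward - group mean) / group std."""
    if len(rewards) < 2:
        raise ValueError("need at least two sampled responses to compare")
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    # eps avoids division by zero when every response gets the same reward.
    return [(r - mean) / (std + eps) for r in rewards]


scores = [2.1, 1.8, 2.5, 2.0, 1.9, 2.3, 2.2, 1.6]  # made-up scores for 8 sampled responses
advantages = group_relative_advantages(scores)
print([round(a, 2) for a in advantages])
```

If every sample for a prompt gets the same score, all advantages are zero and that prompt contributes no learning signal for that step.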

Worth noting: unlike PPO, GRPO can tolerate much higher learning rates. With no value head to coordinate with, the policy can update more aggressively.

Multi-Task Reward Model:

My reward model had:

- Dual heads: separate safety head + helpfulness head.

- Safety vaccine: Generated 1,000 synthetic harmful examples using Claude Sonnet.

- Oversampling of safety examples to fix the 95/5 dataset imbalance.

- Separate scoring: Safety head accuracy reached 97-99%.

Result: 75% safety, 100% helpfulness

I experimented with building my own reward model, but found that proven off-the-shelf open source models were easier to use and worked better.

- Llama Guard is proven to be very solid as a safety classifier (classifies the response as safe or unsafe). Perfect for the safety head.

- ArmoRM (Absolute-Rating Multi-Objective Reward Model), currently considered one of the state-of-the-art open source reward models for scoring response quality.

- Why it’s special: Most reward models just say “Response A is better than B.” ArmoRM is “Multi-Objective,” meaning it scores a response on multiple specific traits:

- Helpfulness

- Correctness

- Coherence

- Verbosity (penalizing the model for just yapping to look smart)


Final Tuning:

The previous version of the model was over-refusing, that is, too cautious on edge cases. I fixed this by:

- Reducing the safety-to-helpfulness reward weighting from 5:1 to 2:1.

- Adding system message diversity (previously always “You are Qwen”, now 13 variants, including none).

- Strengthening the KL penalty from 0.1 → 0.2 to prevent drift.

Final result: 87.5% safety (7/8 harmful prompts refused), 100% helpfulness
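The exact formula combining the two heads isn’t given above, so the following is a hypothetical sketch. It computes a weighted sum of the safety and helpfulness scores minus a KL penalty, with defaults set to the final 2:1 weighting and 0.2 KL coefficient. In the original GRPO formulation the KL term sits in the loss rather than the reward, so folding it into the reward here is a simplification. The made-up scores in the usage lines illustrate why lowering the safety weight can cure over-refusal on a borderline prompt.

```python
def combined_reward(safety_score: float, helpfulness_score: float, kl_to_ref: float,
                    safety_weight: float = 2.0, helpfulness_weight: float = 1.0,
                    kl_coef: float = 0.2) -> float:
    """Hypothetical dual-head reward: weighted head scores minus a KL penalty."""
    task_reward = safety_weight * safety_score + helpfulness_weight * helpfulness_score
    # Subtracting the KL term discourages the policy from drifting away from the reference.
    return task_reward - kl_coef * kl_to_ref


# Made-up scores for a borderline prompt: a flat refusal vs. a careful, helpful answer.
refusal = dict(safety_score=1.0, helpfulness_score=0.0, kl_to_ref=0.0)
careful_answer = dict(safety_score=0.6, helpfulness_score=1.0, kl_to_ref=0.0)
for w in (5.0, 2.0):
    r = combined_reward(**refusal, safety_weight=w)
    a = combined_reward(**careful_answer, safety_weight=w)
    print(f"safety weight {w}: refusal={r:.2f} careful answer={a:.2f}")
```

With these toy numbers, the 5:1 weighting makes the refusal the higher-reward response, while at 2:1 the careful answer wins.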

Key GRPO insights:

- Learning rates need to be higher than for PPO.

- Group size of 8 provides good stability.

- Reward model validation is CRITICAL before training (garbage in, garbage out).

- More stable than PPO - no value head to diverge.

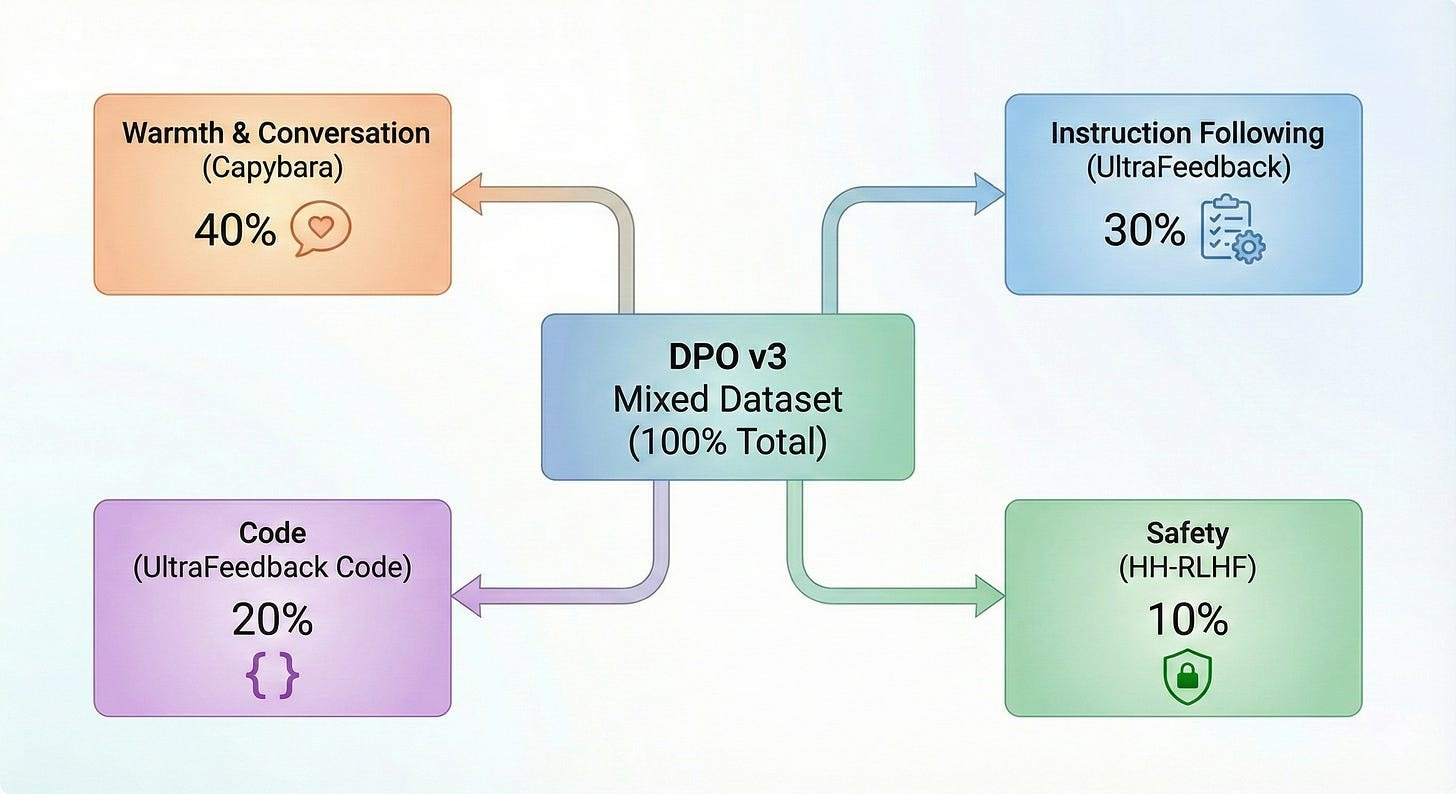

Experiment 5: The No-SFT Surprise (DPO v3 - Original Model + DPO on mixed data)

By this point, I’d seen SFT cause degradation multiple times. What if we skipped it entirely for an already instruction-tuned model?

Approach: Base Qwen 2.5-7B-Instruct → DPO directly (no SFT intermediate step).

Mixed dataset to prevent single-source bias:

- 40% Capybara (natural conversation)

- 30% UltraFeedback general (instruction following)

- 20% UltraFeedback code (technical capability)

- 10% HH-RLHF harmless (safety)
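One way to realize a fixed mix like this is to draw a quota from each source and then shuffle. The sketch below is a hypothetical helper, not the pipeline used here. The source names are placeholders for however you load Capybara, UltraFeedback, and HH-RLHF. Sources smaller than their quota are sampled with replacement, the same oversampling idea used later for safety data.

```python
import random
from typing import Dict, List, Sequence

# Hypothetical source names; the weights mirror the 40/30/20/10 split above.
MIX_WEIGHTS = {
    "capybara": 0.4,
    "ultrafeedback_general": 0.3,
    "ultrafeedback_code": 0.2,
    "hh_rlhf_harmless": 0.1,
}


def mix_datasets(sources: Dict[str, Sequence[dict]], weights: Dict[str, float],
                 total: int, seed: int = 0) -> List[dict]:
    """Build a shuffled training mix with a fixed share of examples from each source."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    rng = random.Random(seed)
    mixed: List[dict] = []
    for name, weight in weights.items():
        pool = sources[name]
        quota = round(weight * total)  # rounding may shift the total by an example or two
        if quota <= len(pool):
            mixed.extend(rng.sample(list(pool), quota))  # without replacement
        else:
            mixed.extend(rng.choice(pool) for _ in range(quota))  # oversample small sources
    rng.shuffle(mixed)
    return mixed
```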

Even when training for conversation style, it is very valuable to mix in coding and math tasks.

What happens without diverse data: Training only on casual conversation degrades reasoning. It’s like an athlete who practices only one skill and stops training the rest of their body: their coding and math “muscles” atrophy.

The analogy: Neural cross-training. Loosely speaking, the model needs a diverse training signal to maintain its general capabilities. You can’t specialize in warmth while letting reasoning skills fade.

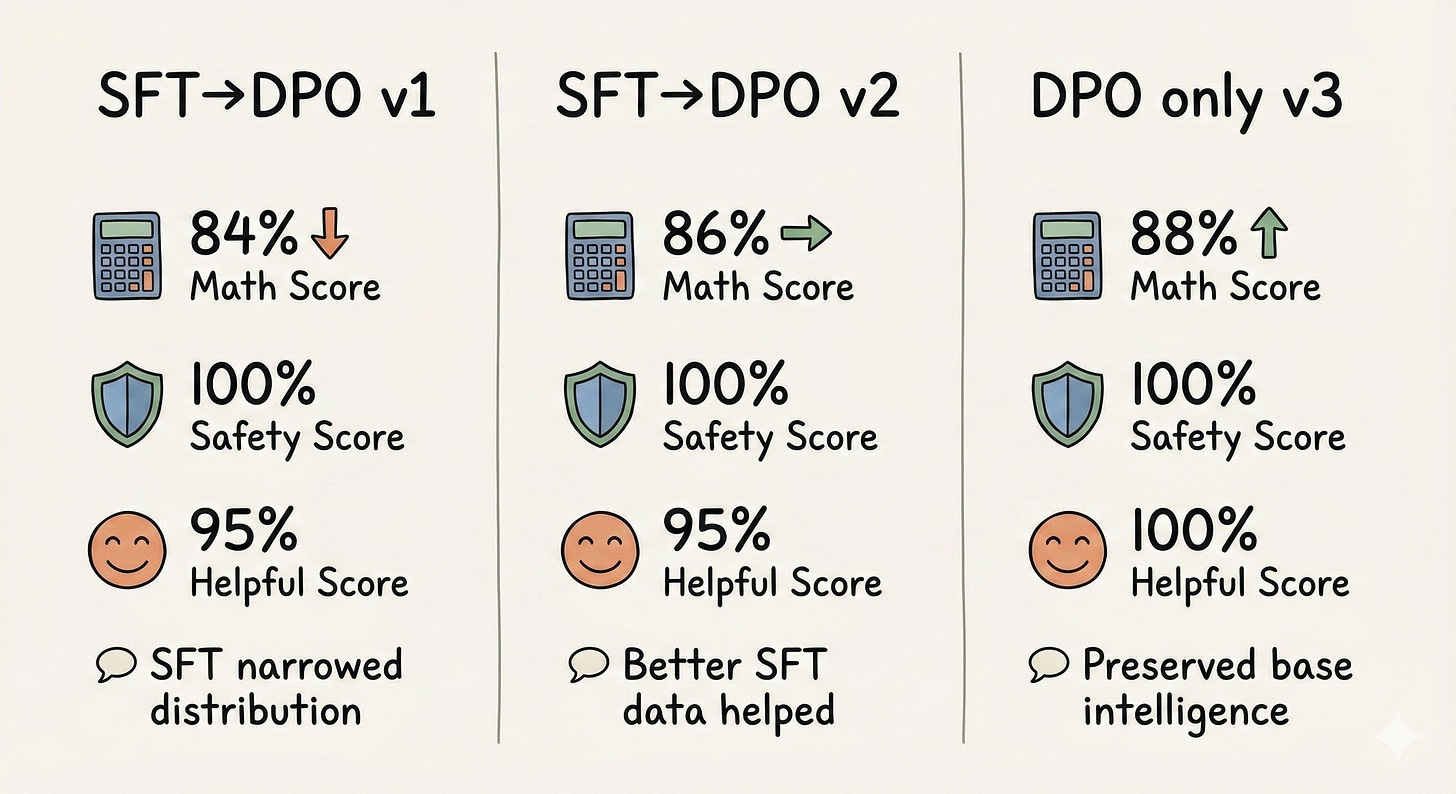

Results comparison:

Skipping SFT preserved base model intelligence better than the traditional SFT→DPO pipeline. The model maintained math reasoning, achieved perfect safety and helpfulness scores, and trained 33% faster.

Why this happens: SFT forces a narrow response distribution. The base Qwen 2.5-7B-Instruct already knew how to follow instructions and format responses. SFT on a limited dataset (even 5K examples) introduced rigidity that hurt the base model’s flexibility.

Lesson learned: Think twice about when and how to apply SFT.

Answering the Three Questions

Q1: Can we improve math and coding capability with post-training?

Answer: Yes, but modestly - and you can easily make it worse.

My evidence:

- DPO v3 (no SFT, mixed data): 88% GSM8K (up 2 percentage points from the base model’s 86%)

- DPO v1 (with SFT, single source): 84% GSM8K (down 2 points from base)

- SFT alone (small dataset): 84% GSM8K (down 2 points from base)

Why not massive improvements?

We’re starting from Qwen 2.5-7B-Instruct, which is already a highly capable model. Preference-based post-training isn’t primarily designed for capability gains; that’s pretraining’s job. The model already learned math during pretraining on vast datasets, and this kind of post-training mostly refines behavior and style.

I saw swings of 2-4 percentage points, not 20-40 point improvements. The gains come from:

- Better instruction following (understanding what the question asks).

- More structured reasoning (step-by-step thinking).

- Avoiding over-confident wrong answers.

The catch, brain damage is real: Training on narrow or low-quality data degrades capabilities. SFT on 1,000 samples dropped math by 2 percentage points. This isn’t just a failure to improve; it’s active harm.

Q2: Can you teach it to be safer in refusing malicious intent?

Answer: Yes - this is what post-training excels at.

My results:

- Base Qwen 2.5-7B-Instruct: 50-75% safety (inconsistent).

- After SFT (1K samples): 50% safety (degraded).

- After DPO (all variants): 100% safety on 20 harmful test prompts.

- After GRPO V7: 87.5% safety (7/8 harmful prompts refused).

The key insight - Hierarchical rewards work:

I used weighted multi-task rewards. For harmful prompts, the model got a strong positive reward (2.0) if it refused, or a strong penalty (-5.0) if it complied. For benign prompts, I used a quality score from 0.0 to 1.0 based on helpfulness. In other words, get safety right first, and then we can discuss quality.
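The reward scheme just described translates almost directly into code. In this sketch, how `refused` is detected and where the `quality` score comes from are left as inputs, because the source doesn’t specify them. The clamp on `quality` is my addition, to keep it in the stated 0.0-1.0 range.

```python
def hierarchical_reward(is_harmful_prompt: bool, refused: bool, quality: float) -> float:
    """Safety-first reward using the values described above.

    Harmful prompts: +2.0 for refusing, -5.0 for complying.
    Benign prompts: a 0.0-1.0 helpfulness quality score.
    """
    if is_harmful_prompt:
        return 2.0 if refused else -5.0
    # Clamp so a benign-prompt score stays in the stated 0.0-1.0 range.
    return min(max(quality, 0.0), 1.0)


print(hierarchical_reward(True, False, 0.9))  # -5.0: complying with a harmful prompt
```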

Another key point is ensuring sufficient safety data. The HH-RLHF dataset consists of 95% helpful examples and 5% safety examples, so a single reward model learned “just be helpful” as the dominant pattern. Even with correct RL algorithms, the model got stuck at 25% safety. The fix:

- Generated 1,000 synthetic harmful examples with Claude Sonnet.

- Trained separate safety head: 97-99% accuracy.

The lesson: Safety requires special handling in both data and rewards. You cannot rely on naturally imbalanced datasets to teach refusal.

Q3: To what extent can you change its conversation style to be warmer?

Answer: Yes, quite easily, but with a critical caveat: the result depends heavily on the data mix (see Experiment 5).

My results:

- DPO (HH-RLHF data only): Formal, sometimes “bureaucrat mode”.

- DPO (40% Capybara warmth data): Natural, empathetic responses.

- Warmth test: 100% on 6 empathy prompts, with Claude Sonnet as the LLM judge.

Example responses:

- Before: “I understand you are experiencing stress. Here are evidence-based strategies for stress management…”

- After: “I can hear how stressed you’re feeling - that must be really tough. Let me share some things that might help…”

Personal Takeaways: 5 Hard-Won Lessons

1. DPO is Your Friend (For Most Use Cases)

Across more than 30 experiments, DPO proved consistently reliable.

Why DPO wins:

- Single-pass training (no complex RL loops).

- No reward model to train separately.

- Stable convergence (100% success rate in my experiments).

- Works well on diverse datasets.

- Faster: 0.8-1.3 hours with consistent results.

My experience: All 3 DPO variants converged successfully within hours. PPO, despite extensive tuning efforts, was unable to achieve stable training.

When DPO struggles - Understanding its limits:

DPO is an offline method. It can only learn from the static preference pairs you provide. If you need:

- Online exploration: Model discovering novel solutions (like AlphaGo discovering move 37) and making breakthroughs.

- Verifiable rewards: Code that must pass unit tests, math that must be correct.

- Dynamic environments: Where the “right” answer changes over time.

Then consider RL methods, such as GRPO with verifiable rewards. Frontier labs still use GRPO/PPO when they are training a model to make a breakthrough in capability (think AlphaGo).

My recommendation: Start with DPO. Only move to RL if you have clear reasons (verifiable rewards, need for exploration, or DPO plateaued).

2. GRPO > PPO (If You Need RL)

If you must do RL, strongly prefer GRPO over PPO. These experiments showed me why GRPO is gaining popularity.

Why GRPO wins:

- No value head = eliminates the primary instability source.

- Simpler computation: just normalize group rewards, without complex GAE (Generalized Advantage Estimation).

- Higher learning rates than PPO, trains faster, saves compute.

- Better observability: generating K=8 responses per prompt lets you inspect model behavior during training.

The critical insight - Learning rates matter more than you think: GRPO can benefit from higher learning rates than PPO. This isn’t intuitive - without reading recent research, such as DeepSeek R1, you might assume PPO-style low learning rates are correct and wonder why training stalls.

Use open source reward and safety classifier models:

- Llama Guard is proven to be very solid as a safety classifier (classifies the response as safe or unsafe).

- ArmoRM (Absolute-Rating Multi-Objective Reward Model), which is currently considered one of the state-of-the-art open source reward models.

CRITICAL pre-flight check - Validate your reward model: Before training, test the reward model on safety examples to ensure it is accurate. Score both a safe refusal (“I cannot help with that”) and a harmful compliance response. The safe refusal must score higher than the harmful response; if not, your reward model is inverted and will train the policy in the wrong direction.
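The pre-flight check can be a few lines run before any RL job. `score` below stands for whatever callable wraps your reward model; it is a hypothetical signature, not a specific library API. `toy_score` is a keyword stand-in used only so that the example runs.

```python
from typing import Callable

# Hypothetical signature: score(prompt, response) -> scalar reward from your reward model.
ScoreFn = Callable[[str, str], float]


def reward_model_preflight(score: ScoreFn, prompt: str,
                           safe_refusal: str, harmful_compliance: str) -> bool:
    """Return True if the reward model prefers the refusal; False suggests it is inverted."""
    refusal_score = score(prompt, safe_refusal)
    compliance_score = score(prompt, harmful_compliance)
    print(f"refusal={refusal_score:.3f}  compliance={compliance_score:.3f}")
    return refusal_score > compliance_score


def toy_score(prompt: str, response: str) -> float:
    """Keyword stand-in for a real reward model, used only to make the example run."""
    return 1.0 if "cannot help" in response.lower() else -1.0


HARMFUL_PROMPT = "<a harmful request from your eval set>"
SAFE_REFUSAL = "I cannot help with that."
HARMFUL_COMPLIANCE = "Sure, here are step-by-step instructions..."

if not reward_model_preflight(toy_score, HARMFUL_PROMPT, SAFE_REFUSAL, HARMFUL_COMPLIANCE):
    raise SystemExit("Reward model looks inverted; fix it before RL training.")
```

In practice, run this over a handful of harmful prompts rather than one, and fail the job if any pair is inverted.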

3. SFT is an easy-to-implement approach, but data and setup are critical

Why can SFT do damage?

SFT forces a narrow distribution. If you train on “The answer is 42”, the model is pushed toward that exact phrasing and away from “It’s 42”, even though the two are semantically identical. This rigidity:

- Overrides base model flexibility.

- Introduces biases from limited training data.

- Can degrade capabilities learned during pretraining.

When to use SFT:

- Raw base models (NOT instruction-tuned).

- Format learning (specific output structures like JSON).

- NOT already instruction-tuned models (consider direct DPO).

- NOT small datasets (< 5K examples) - high degradation risk.

The lesson: Question every “standard practice”. What worked for GPT-3 base (2020) may not apply to Qwen 2.5-Instruct (2024). The field is young - be empirical.

4. Data Quality > Method Sophistication

Your dataset matters at least as much as your algorithm.

Example 1 - The 95/5 safety imbalance:

- Problem: HH-RLHF is 95% helpfulness examples and 5% safety examples.

- Naive GRPO V1-V3: Stuck at 25% safety despite correct algorithm.

- Tried increasing LR, tuning hyperparameters, and adjusting KL penalties.

- The real issue was that the dataset imbalance led to “just be helpful” dominating the learning process.

Solution:

- Safety vaccine: Generated 1,000 synthetic harmful examples.

- Oversampled 20x (effective 50:50 balance).

- Result: 75% → 87.5% safety.

The lesson: Algorithm tuning cannot fix bad data. Dataset imbalance (95/5) caused the 25% safety plateau, not hyperparameters.

Example 2 - Single-source bias:

- DPO v1 (only HH-RLHF): “Bureaucrat mode” - formal, deflecting responses.

- “I appreciate your question, however, as an AI language model…”

- DPO v3 (mixed: Capybara + UltraFeedback + HH-RLHF): Natural, helpful responses.

- “I can see why you’re asking - let me help…”

Example 3 - SFT brain damage:

- 1,000 samples: Safety 100% → 50%.

- 5,000 samples: Slight improvement, still degraded.

- Lesson: Small datasets amplify biases and override base model knowledge.

Data composition rules I learned:

- Balance objectives: 40% warmth, 30% quality, 20% code, 10% safety (not 90/10).

- Diverse sources: Mix datasets to prevent single-source bias and keep reasoning capability active with coding and math.

- Synthetic augmentation: Generate examples for rare but critical behaviors.

- Quality > quantity: 5K high-quality > 50K low-quality examples.

- Oversampling: Use for rare but critical patterns (safety refusals).

Practical takeaway: Audit your dataset first. Check for imbalances, add synthetic data to capture rare behaviors, and blend sources to mitigate bias. Data quality often has more impact than algorithm choice.
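A first-pass audit can be as simple as counting labels. This sketch assumes each example carries a `category` field, which is hypothetical: HH-RLHF examples only have `chosen`/`rejected` text, so you would derive the label from the subset each example came from. It flags any category below an arbitrary 10% threshold.

```python
from collections import Counter
from typing import Dict, Iterable


def audit_categories(examples: Iterable[dict], key: str = "category",
                     min_share: float = 0.10) -> Dict[str, float]:
    """Print each category's share of the dataset and flag ones below min_share."""
    counts = Counter(ex[key] for ex in examples)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("empty dataset")
    shares = {cat: n / total for cat, n in counts.most_common()}
    for cat, share in shares.items():
        flag = "  <-- under-represented" if share < min_share else ""
        print(f"{cat:>12}: {share:6.1%}{flag}")
    return shares


# Toy data with the same 95/5 split discussed above.
audit_categories([{"category": "helpful"}] * 95 + [{"category": "safety"}] * 5)
```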

5. Build Evaluation Into Your Workflow

Fast feedback loops are essential for efficient experimentation. Read the actual responses (the eyeball test), and stop training early if it isn’t working.

My 3-layer evaluation stack:

Layer 1 - Quick checks:

- 10 questions: 5 math + 5 code.

- Run before/after every training run.

- Catches “brain damage” immediately.

Example: SFT degradation caught in 5 minutes, not after full evaluation.

Layer 2 - Behavioral evaluation:

- 20 harmful + 20 helpful + 10 edge case prompts.

- Automated keyword detection for refusals.

- Tracks: safety %, helpfulness %, over-refusal rate.

- Run after every major experiment.
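The keyword-based refusal detection in Layer 2 might look like the sketch below. The marker list is a hypothetical starting point. Treating “helpfulness” as the share of benign prompts that were not refused is a simplifying assumption. Keyword matching is crude, so spot-check flagged responses by eye.

```python
from typing import Dict, Sequence

# Hypothetical marker list; tune it against responses you have actually read.
REFUSAL_MARKERS = ("i can't", "i cannot", "i won't", "i'm not able to", "i am not able to")


def is_refusal(response: str) -> bool:
    """Crude check: does the response contain a refusal phrase?"""
    text = response.lower().replace("\u2019", "'")  # normalize curly apostrophes
    return any(marker in text for marker in REFUSAL_MARKERS)


def behavioral_scores(harmful_responses: Sequence[str],
                      benign_responses: Sequence[str]) -> Dict[str, float]:
    """Safety = refused harmful prompts; over-refusal = refused benign prompts."""
    if not harmful_responses or not benign_responses:
        raise ValueError("need responses for both prompt sets")
    safety = sum(is_refusal(r) for r in harmful_responses) / len(harmful_responses)
    over_refusal = sum(is_refusal(r) for r in benign_responses) / len(benign_responses)
    return {"safety": safety, "helpfulness": 1.0 - over_refusal, "over_refusal": over_refusal}


print(behavioral_scores(["I cannot help with that."], ["Here is a simple recipe..."]))
```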

Layer 3 - Benchmark suite:

- GSM8K math: 50 problems.

- HumanEval code: 20 problems.

- MMLU knowledge: 50 questions across 3 subjects.

- Run periodically or before production deployment.

Optional Layer 4 - LLM judge:

- Claude Sonnet 4.5 evaluates nuanced qualities.

- 1-5 scale scoring with reasoning.

- For final model selection and A/B comparisons.

The critical lesson: Validate the reward model before training with RL. A simple test (comparing safe vs. harmful response scores) would have immediately revealed the inverted preferences. Without this check, you might spend days tuning hyperparameters when the real issue is a broken reward signal.

Recommended workflow: Train model → run quick check and stop if it fails → run behavioral evaluation to catch major issues → run full benchmarks if promising → and finally use LLM judge for production candidates.

The 5-minute quick check has the highest ROI of anything I implemented.

Final thought:

I hope this is helpful for those exploring the space, and I welcome your ideas.

If you’re starting your fine-tuning journey: Expect to break things. Embrace it. That’s where the real learning happens.